Research conducted at Stanford University by Michal Kosinski and Yilun Wang showed that machine vision can infer sexual orientation with 81% accuracy in men and 74% in women.

The research used 130,741 images of 36,630 men and 170,360 images of 38,593 women, downloaded from a popular American dating website. Facial features were extracted from the images with a deep neural network, and “these features were entered into a logistic regression aimed at classifying sexual orientation,” according to the project’s description on the Open Science Framework.

“Given a single facial image, a classifier could correctly distinguish between gay and heterosexual men in 81% of cases, and in 74% of cases for women.”

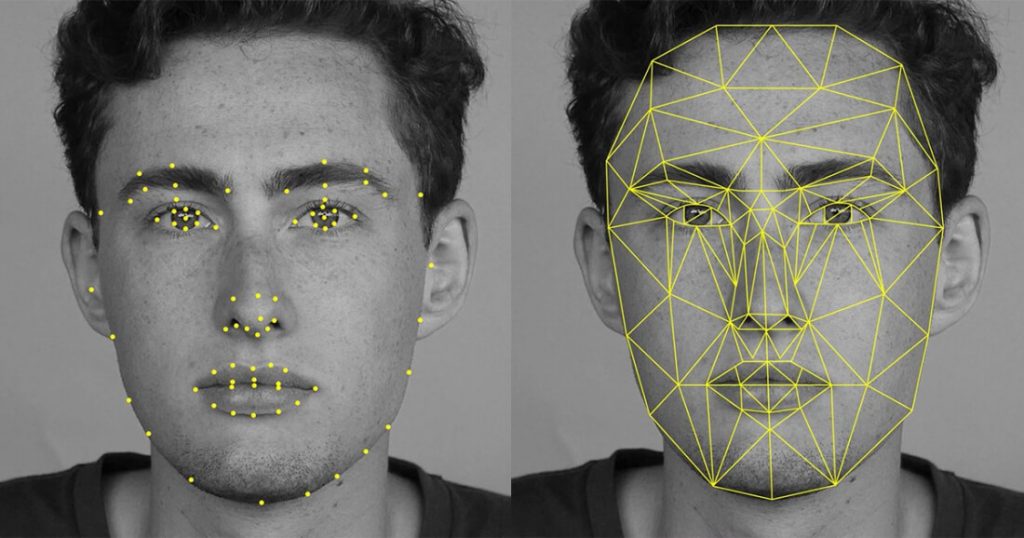

The images were fed into a piece of software called VGG-Face, which converts each face into a string of numbers known as its ‘faceprint’, reports The Economist. A predictive model was then used to find correlations between the features of the ‘faceprints’ and the stated sexuality of each face’s owner.

When the resulting model was run on data which it had not seen before, it far outperformed humans at distinguishing between gay and straight faces.

When shown one random photo each of a gay and a straight man, the model distinguished between them correctly 81% of the time. Given five pictures of each man, it identified their sexuality correctly 91% of the time.

The model performed worse with women, telling gay and straight women apart with only 74% accuracy after one photo, and 83% accuracy after five.

Researchers suggest the software works by picking up on subtle differences in facial structure. With the right data sets, reports The Economist, similar AI systems might be trained to spot other intimate traits, such as cultural affiliations or political views.

The newly released findings have obvious and worrying long-term implications, particularly in countries with high levels of anti-gay sentiment.

Details of the research are soon to be published in the Journal of Personality and Social Psychology.

© 2017 GCN (Gay Community News). All rights reserved.